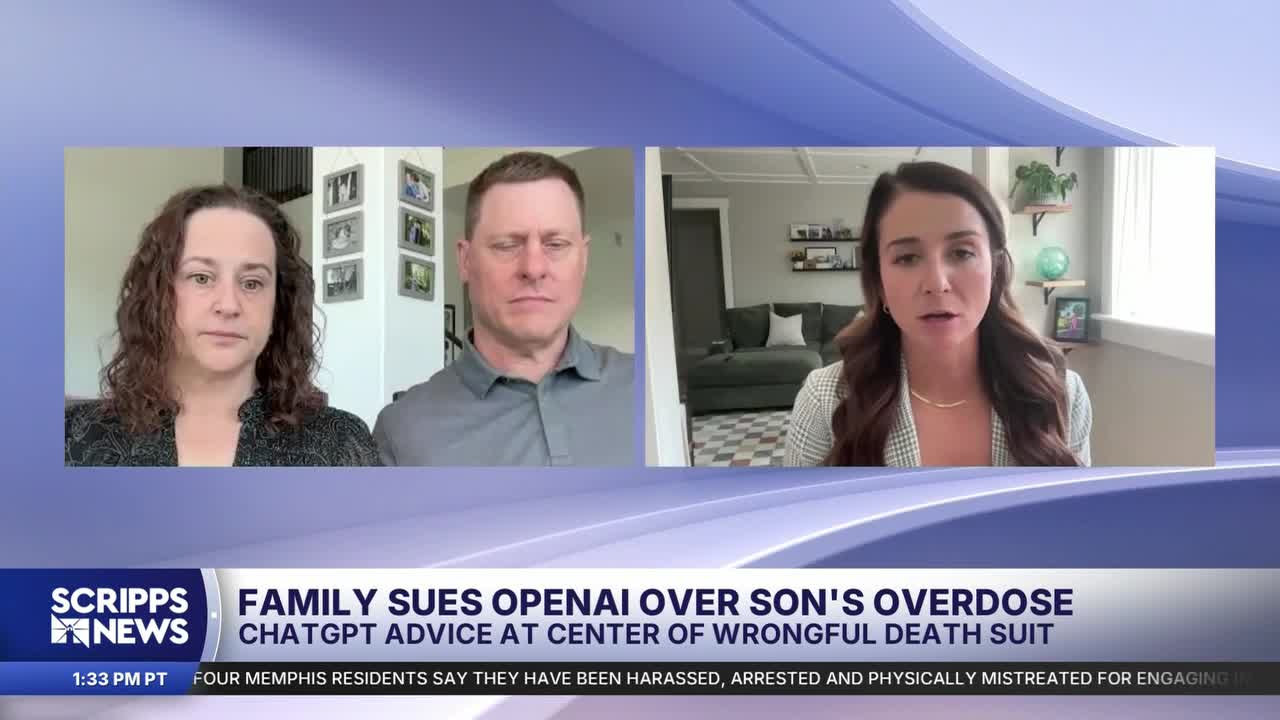

Leila Turner-Scott, Sam Nelson’s mother, said she knew her 19-year-old son used ChatGPT for schoolwork and everyday questions. She never imagined the chatbot could become a source of what she described as “deadly medical advice.”

In a wrongful death lawsuit filed this week in California, Turner-Scott and Sam’s stepfather, Angus Scott, accuse OpenAI of failing to protect users after ChatGPT allegedly advised Sam on how to safely combine drugs. The case could become a major legal test of how far AI companies’ responsibility extends when users rely on their tools for health guidance.

“We had no idea it was capable of even talking to him about drug use,” Turner-Scott told Scripps News in an interview. “This never entered my mind as a danger for my child, that using a glorified search engine would end up giving him horrible, you know, deadly medical advice.”

According to the complaint, Sam used ChatGPT’s GPT-4o model to ask questions about recreational drug use, including how to manage nausea caused by kratom, which is an herbal supplement with opioid-like effects.

The lawsuit alleges the chatbot advised him to take Xanax to relieve those symptoms but did not warn that the combination could be dangerous or potentially fatal. His family says that advice directly contributed to his death.

The lawsuit argues that ChatGPT went beyond simply providing information and instead presented itself as a reliable source of medical guidance.

“It gave him bad advice, drew him in with the personalization and the persona the chatbot took on,” Angus Scott, Nelson’s stepfather, told Scripps News. “It’s disingenuous.”

Their legal claim centers on product liability and negligence, arguing OpenAI released a powerful AI tool without adequate safeguards to prevent dangerous health-related recommendations.

It is the latest in a growing wave of lawsuits testing whether AI companies can be held responsible when chatbot-generated advice allegedly causes real-world harm.

Some cases have focused on copyright or misinformation. Others, including this one, are pushing courts to consider whether chatbots are crossing into areas traditionally reserved for licensed professionals, such as medicine or mental health counseling.

In a statement to Scripps News, OpenAI called Nelson’s situation “heartbreaking” and noted that the version of ChatGPT Sam used is no longer available. The company said ChatGPT’s initial response was “I’m sorry, but I cannot provide information or guidance on substance abuse,” and that additional prompts ultimately did elicit such guidance.

“ChatGPT is not a substitute for medical or mental health care,” OpenAI spokesperson Drew Pusateri wrote in part. “We have continued to strengthen how it responds in sensitive situations with input from mental health experts.”

The company says newer safeguards have since been designed to identify certain high-risk prompts and direct users toward professional help instead of providing detailed advice.

Earlier this year, OpenAI also launched ChatGPT Health, a health-focused initiative the company says was developed in collaboration with physicians and designed to help users seek information more safely while protecting their privacy.

But Sam’s family says that expansion only deepens their concerns.

“Part of the problem I have with hearing when they say, ‘We’ve made it safer’ or ‘We’re continuing to improve our product,’ is that the company also said the 4o model was safe,” Turner-Scott said. “The risk otherwise is death.”

As millions of people increasingly turn to AI chatbots for help with everything from homework to medical questions, regulators and courts are struggling to keep pace with rapidly evolving technology.

For Sam’s family, the goal of the lawsuit is not only accountability but also stronger oversight that could prevent other families from facing the same loss.

“They don’t want to regulate it because that’s going to slow things down. And then there’s no money in that,” Scott said. “It’s people’s lives and people’s health. This is something that could’ve happened to anybody. It’s not a one-off, or just at-risk youth. It’s honor roll kids.”